AGI Timelines Are All Over the Map

What the disagreement says about business incentives, technical hurdles, and the meaning of “general intelligence.”

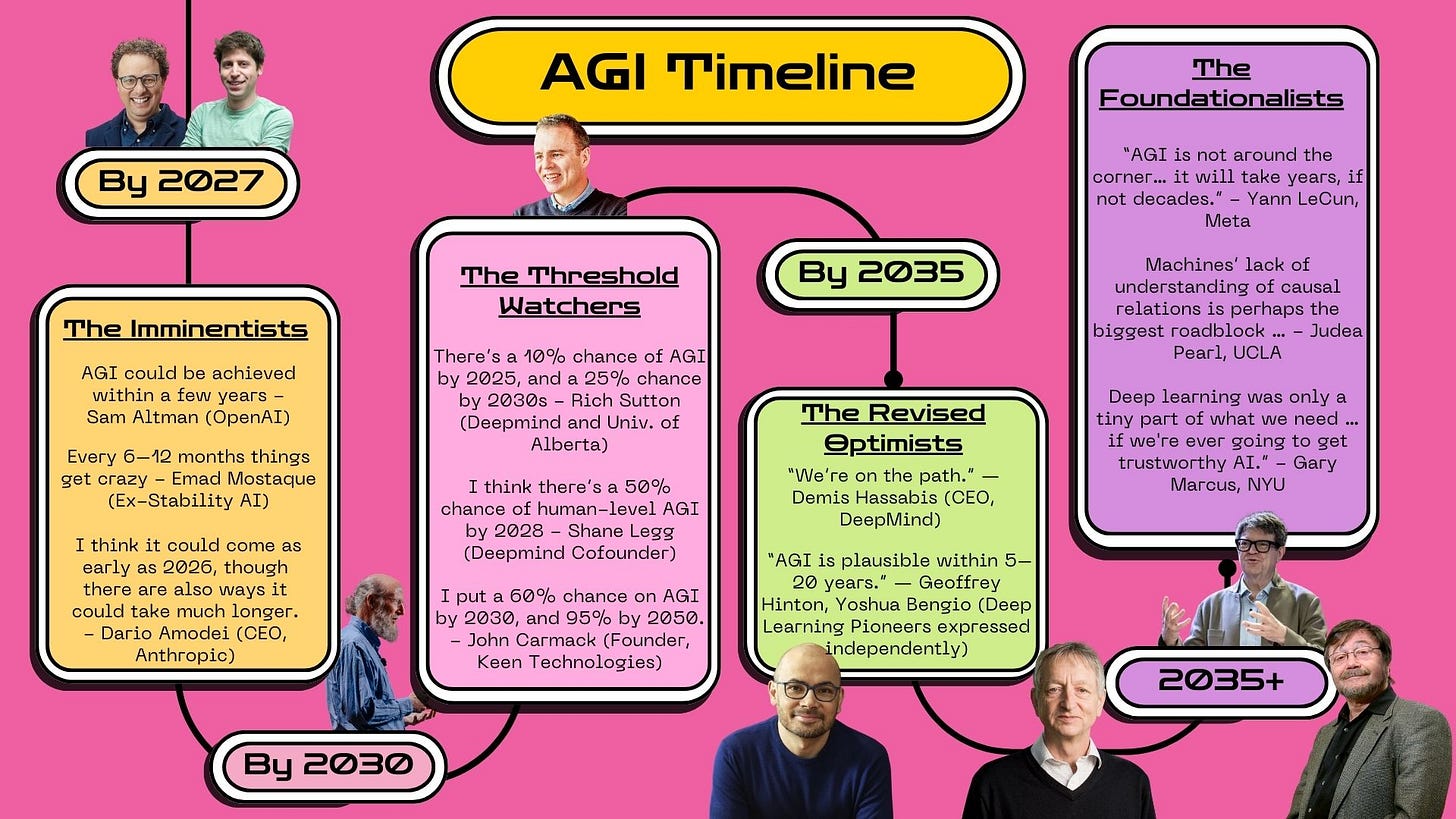

Here's what's been fascinating me over the past few years: the complete chaos in AGI timeline predictions from people who should, theoretically, have the best information. Sam Altman keeps saying AGI arrives within a couple of years. Yann LeCun pushes back hard, arguing it'll take years if not decades. Geoffrey Hinton, a 2024 Nobel laureate in physics, offers a thoughtful but broad 5-to-20-year range that acknowledges the genuine uncertainty. Shane Legg puts even odds on 2028.

This isn't your typical Silicon Valley disagreement about product roadmaps. These are fundamental disagreements about reality itself. Either current AI approaches are close to a breakthrough, or they're missing something so basic that decades of additional research will be required.

What makes this particularly interesting from a business perspective is how the predictions correlate with incentives. GPT-5's lukewarm reception this month only highlighted the pattern: OpenAI had signaled another breakthrough; users found incremental improvements. Suddenly Altman's aggressive timelines looked less like insider knowledge and more like the kind of competitive positioning that makes sense when you're trying to recruit talent and justify valuations.

Yet the predictions keep coming, and the range keeps widening. To make sense of this chaos, it helps to group the predictors into four categories based not just on their timelines, but on their underlying assumptions about how AGI might be achieved.

The Imminentists: Scaling Optimists

The first group—the Imminentists—expect AGI within a couple of years, or even sooner. These are the true believers in exponential scaling, convinced that current approaches just need more compute, more data, and more clever engineering to cross the AGI threshold.

Sam Altman leads this camp, and his confident assertions about near-term breakthroughs matter more than anyone else's—not necessarily because he's right, but because he controls the most capital and talent in the space. "AGI could be achieved within a few years," he's stated repeatedly, and here's what's interesting: the timing of these predictions isn't coincidental. They align perfectly with funding rounds, talent recruiting, and competitive positioning moments.

From a business perspective, Altman's timeline optimism is brilliant regardless of technical accuracy. When you're competing with Google and Anthropic for the world's best AI researchers, conservative estimates are a luxury you can't afford. Aggressive timelines help justify enormous compute investments and maintain the kind of urgency that attracts top talent.

Emad Mostaque, who left Stability AI amid company turmoil, captured the Imminentist mindset with his observations that technological acceleration is arriving in increasingly short bursts. This group sees exponential progress everywhere and interprets each model release as evidence that AGI is just around the corner. They tend to extrapolate from recent breakthroughs rather than accounting for potential scaling limitations or technical plateaus.

The Imminentist perspective makes intuitive sense if you're running an AI company trying to attract top talent and investment capital. Bold timeline predictions generate excitement, funding, and competitive urgency. Engineers want to work on projects that might achieve AGI during their careers. Investors want to fund companies that might capture the AGI market while competitors are still building infrastructure.

But the Imminentist position requires believing several things simultaneously. First, that current scaling approaches will continue delivering exponential improvements indefinitely, despite growing evidence of diminishing returns in language model performance. Second, that the gap between current AI capabilities and general intelligence is smaller than most researchers believe. Third, that no fundamental breakthroughs in architecture or training methods are required—just better execution of existing approaches.

GPT-5's incremental improvements have complicated this narrative. The model represents OpenAI's largest investment in scaling to date, yet delivered performance gains that felt more like GPT-4.1 than a true generational leap. For Imminentists, this creates a credibility problem: if scaling isn't delivering exponential improvements now, why should we expect it to suddenly accelerate toward AGI?

Dario Amodei of Anthropic occupies an interesting position within the Imminentist camp. "I think it could come as early as 2026, though there are also ways it could take much longer," he's stated, which sounds hedged but still places him in the near-term optimist category. Amodei's predictions carry particular weight because Anthropic has focused heavily on scaling challenges and safety considerations that other labs have downplayed.

The business incentives behind Imminentist predictions are obvious, but that doesn't make them wrong. Sometimes competitive pressure drives accurate forecasting—companies with the most at stake often have the best information about technical feasibility. The question is whether recent evidence supports continued exponential scaling or suggests that current approaches are reaching fundamental limits.

The Threshold Watchers: Probabilistic Pragmatists

The second group, the Threshold Watchers, think in probabilities rather than specific dates. They acknowledge rapid recent progress but are careful about predicting when a true threshold might be crossed, recognizing that breakthrough technologies often arrive unpredictably.

Richard Sutton, the reinforcement learning pioneer who co-wrote the field's standard textbook, exemplifies this approach, putting low odds on AGI arriving by 2025 but higher chances by 2030. These aren't hedges; they're estimates that acknowledge fundamental uncertainty about breakthrough timing while still being actionable.

Shane Legg, DeepMind cofounder and one of the few people who has been thinking seriously about AGI timelines since before deep learning's resurgence, offers similar probabilistic thinking: "I think there's a 50% chance of human-level AGI by 2028." Legg's estimate carries particular weight because he's been relatively accurate about AI development timelines over the past decade.

John Carmack, founder of Keen Technologies and a legendary programmer whose technical judgment spans graphics engines and rocket propulsion, provides perhaps the most detailed probabilistic assessment: "I put a 60% chance on AGI by 2030, and 95% by 2050." The gap between the two figures reflects both optimism about current technical progress and humility about the uncertainty inherent in predicting breakthrough technologies.

What's interesting about the Threshold Watchers is their professional backgrounds, and their distance from the fundraising game. Sutton has spent decades working on reinforcement learning approaches that looked purely academic until recent breakthroughs made them commercially viable. Legg helped found DeepMind specifically to pursue AGI, giving him a front-row seat to both the progress and the limitations of current approaches. Carmack has a track record of technical predictions that initially seemed impossible but proved accurate given sufficient development time.

None of them depends on hyped timelines to attract investors or talent. That independence shows in their methodology.

The Threshold Watchers tend to have deep technical backgrounds and understand both the potential and limitations of current approaches. They've seen enough AI winters and summers to know that progress isn't always linear, but they're also directly involved with research that has exceeded their own previous expectations.

Their probabilistic approach reflects a sophisticated understanding of breakthrough technologies. You can't predict exactly when a fundamental advance will occur, but you can estimate probabilities based on current technical trajectories, resource allocation, and the number of research teams working on related problems. This methodology has proven relatively accurate for other complex technological developments, from semiconductors to biotechnology.

The Threshold Watchers also tend to focus on specific technical milestones rather than general intelligence as a monolithic achievement. They understand that AGI likely involves multiple breakthroughs across different capabilities—reasoning, planning, learning, generalization—that might not arrive simultaneously. This creates both optimism about near-term progress on specific capabilities and realism about the complexity of achieving comprehensive general intelligence.

The Revised Optimists: Experience-Driven Realists

Then we have the Revised Optimists—researchers who previously anticipated much longer timelines but now suggest a window of 5 to 20 years. This group includes some of the most credentialed names in AI research, making their timeline revisions particularly significant for the broader research community.

Geoffrey Hinton, both a Turing Award winner and a Nobel laureate, who pioneered many of the techniques powering current AI systems, now says "AGI is plausible within 5–20 years." This represents a dramatic revision from his previous estimates, which typically extended decades into the future. Hinton's timeline compression reflects direct experience with capabilities he didn't expect current approaches to achieve.

Yoshua Bengio, also a Turing Award recipient and one of the three "godfathers of deep learning," has independently expressed similar views. Like Hinton, he spent decades on neural-network methods that stayed largely in the lab until recent breakthroughs made them commercially viable and surprisingly capable.

What makes the Revised Optimists particularly credible is that their timeline compression works against their professional incentives. Academic researchers don't benefit from hype cycles or competitive positioning; if anything, they're rewarded for careful, conservative predictions that acknowledge uncertainty and limitations. When researchers like Hinton and Bengio shorten their timelines, it typically reflects genuine surprise at technical progress rather than strategic communication.

Plus, frankly, these are the people who pioneered the underlying techniques. When one of the researchers who popularized backpropagation starts sounding more optimistic about timelines, that's worth paying attention to.

Demis Hassabis, another Nobel Laureate and CEO of DeepMind, captures the Revised Optimist perspective in his careful phrasing: "We're on the path." It's a statement that acknowledges meaningful progress without committing to specific timelines or claiming that current approaches are sufficient. Hassabis has particular credibility because DeepMind has consistently achieved technical milestones that other researchers thought would take much longer—from game-playing AI to protein folding to mathematical theorem proving.

The Revised Optimists represent a particularly interesting category because their timeline changes reflect direct professional experience with recent AI capabilities. These researchers have been genuinely surprised by how quickly certain technical barriers fell, leading them to revise long-held assumptions about AI development while maintaining intellectual humility about remaining challenges.

Their timeline revisions also reflect a sophisticated understanding of what constitutes meaningful progress toward general intelligence. Unlike the Imminentists, who might extrapolate from any capability improvement, the Revised Optimists focus on specific technical achievements that represent genuine advances in reasoning, learning, or generalization. When they shorten their timelines, it's typically because they've observed capabilities that they previously thought were much further away.

The group's credibility comes partly from their track record of accurate technical predictions and partly from their lack of obvious business incentives to hype AI capabilities. When Nobel Prize winners who pioneered the underlying techniques start shortening their timelines, the broader research community pays attention.

The Foundationalists: Architectural Skeptics

Finally, the Foundationalists take a more cautious view, arguing that current systems lack essential ingredients—such as causal reasoning, grounded perception, or architectural completeness—that are necessary for true general intelligence.

Yann LeCun represents the skeptical end of this spectrum: "AGI is not around the corner… it will take years, if not decades." His position comes from deep understanding of current AI limitations and skepticism about whether scaling alone can overcome fundamental architectural problems. LeCun's perspective is particularly interesting because he works at Meta, which has significant AGI investments, yet his academic position at NYU allows him intellectual independence from corporate messaging.

LeCun's skepticism focuses on specific technical limitations. Current language models, no matter how large or well-trained, lack understanding of physical reality, causal relationships, and persistent goal-directed behavior. They excel at pattern matching and linguistic manipulation but struggle with the kind of grounded reasoning that would be required for general intelligence.

Judea Pearl, the Turing Award winner who developed much of the mathematical framework for reasoning about cause and effect, focuses on specific technical gaps: "Machines' lack of understanding of causal relations is perhaps the biggest roadblock." Pearl's timeline skepticism stems from his conviction that current approaches are missing crucial capabilities that scaling alone can't supply.

Gary Marcus takes an even more critical stance: "Deep learning was only a tiny part of what we need … if we're ever going to get trustworthy AI." Marcus has been one of the most persistent critics of deep learning hype, consistently arguing that current approaches are fundamentally limited and that AGI will require architectural breakthroughs that the field hasn't yet achieved.

The Foundationalists see current AI progress as impressive but ultimately insufficient for true general intelligence. They argue that scaling current approaches will produce more capable narrow AI systems but won't bridge the gap to general intelligence without fundamental advances in architecture, training methods, or our understanding of intelligence itself.

What's particularly interesting about the Foundationalist position is that it's not necessarily pessimistic about AGI—it's skeptical about current approaches. Many Foundationalists believe that AGI is achievable but will require technical breakthroughs that make current timeline predictions meaningless. They're less concerned with when AGI arrives and more focused on what technical capabilities will be required to achieve it.

The Foundationalist perspective also reflects a different relationship with hype cycles and commercial pressure. LeCun, Pearl, and Marcus speak largely from academic positions, so they can be candid about technical limitations without needing to raise funding or attract talent on the strength of AGI promises. This independence allows them to focus on fundamental technical challenges rather than optimistic extrapolations from recent progress.

What Drives the Timeline Chaos

Here's where it gets interesting from a business perspective. The patterns become clearer when you consider each group's professional context, technical background, and—most importantly—their relationship with commercial incentives. The timeline predictions aren't just technical forecasts—they're reflections of different professional perspectives on AI development, filtered through very different business realities.

The Imminentists tend to be company leaders who need to maintain competitive positioning and investor confidence. Their optimistic timelines serve business purposes while reflecting genuine belief in exponential scaling. But they're also the most exposed to bias from recent progress and competitive pressure. When you're running an AI company, conservative timeline predictions can be self-defeating: they reduce your ability to attract talent and investment relative to competitors making bolder claims.

The business logic of Imminentist predictions is straightforward. In a rapidly moving field with massive potential returns, investors and talent gravitate toward companies that might capture early market leadership. Conservative timeline predictions signal that you don't understand the technical opportunities or aren't positioned to capitalize on them. Bold predictions, even if ultimately wrong, can create competitive advantages that outlast their accuracy.

This is why I find the Revised Optimists so compelling—their timeline revisions work against their career interests, which makes them more credible signals.

The Threshold Watchers often come from academic or research backgrounds where probabilistic thinking is natural and intellectual honesty is professionally rewarded. They've seen enough technological development to understand both the potential for rapid breakthroughs and the possibility of extended plateaus. Their probabilistic approach reflects a sophisticated understanding of how breakthrough technologies actually develop: uncertain timing, but predictable patterns of progress.

The Revised Optimists are driven less by business context than by surprise. Their compressed timelines come from direct experience with capabilities they didn't expect current methods to reach, not from competitive positioning or theoretical analysis, and they still hold those revisions with humility about the remaining uncertainty.

The Foundationalists, meanwhile, focus on fundamental technical limitations rather than recent progress or business incentives. Their skepticism comes from a deep understanding of what current systems can and cannot do, combined with the conviction that true intelligence requires capabilities that current approaches don't address. They're less influenced by hype cycles or competitive pressure because their academic positions provide intellectual independence.

The Hidden Standards War

The timeline chaos reveals something more fundamental than disagreement about how fast technology develops. These predictions rest on different views of what artificial general intelligence actually requires, and those definitional frameworks differ so much that comparing the timelines is almost meaningless.

The Imminentists see AGI as an extension of current capabilities—better language models, more multimodal integration, more sophisticated reasoning within existing architectural approaches. Under this definition, AGI becomes achievable through scaling and engineering improvements rather than fundamental breakthroughs.

The Threshold Watchers acknowledge uncertainty but generally assume current approaches can reach AGI with sufficient scale, refinement, and possibly some architectural innovations. Their probabilistic assessments reflect both optimism about current technical directions and realism about the complexity of achieving comprehensive intelligence.

The Revised Optimists have been surprised by recent progress but remain cautious about extrapolating too far. Their timeline revisions reflect genuine technical optimism tempered by awareness of remaining challenges that might require years or decades to solve.

The Foundationalists argue that AGI requires architectural breakthroughs that current approaches can't deliver. Under their definition, timeline predictions based on scaling existing methods are meaningless because they're solving the wrong technical problems.

The real issue: definitional disagreement. When Altman predicts AGI in a couple of years, he's measuring against economic productivity benchmarks that current language models can already approximate. When LeCun dismisses those timelines, he's thinking about architectural completeness and causal reasoning that current systems don't possess. When Hinton shortens his estimates, he's impressed by capabilities he didn't expect current approaches to achieve. When Sutton offers probabilistic ranges, he's accounting for fundamental uncertainty about what breakthroughs might be required.

The timeline chaos masks a deeper standards war about what counts as artificial general intelligence, with each prediction reflecting different definitional frameworks that could determine not just when AGI arrives, but what it looks like when it does.

These different definitions will determine which companies capture the AGI market, how much they're worth, and what kinds of applications actually get built.